I’m grinding on a personal project that will require a lot of 3D assets: meshes that will be textured and exported to a game engine. In this post I’m sharing a Python script that can be put into a Houdini shelf tool and executed to give a starting point, without having to memorize all the different parameters that need to be set.

Here it is; I’ll explain more after the code block:

```python
# --- Modules

import datetime  # Console timestamping

import hou  # Already available inside Houdini shelf tools; imported here for clarity

# --- Variables

# Vertical node graph spacing
vertOffset = hou.Vector2(0, -1.05)
vertOffsetSum = hou.Vector2(0, 0)

# Stores node object references, paths
assetNodeRefs = []  # Stores node object references
assetNodePaths = []  # Stores full tree paths of nodes

# --- Functions

# Utility that spits out all the parameters for a given node
def dumpParms(myNodePath):
    print("For node: " + myNodePath)  # Print node path once
    for param in hou.node(myNodePath).parms():
        # Some values need to be cast to str before printing
        print("Name: " + param.name() + " [ " + str(param.eval()) + " ]")


def makeNode(myPath, myType):
    # Debug
    print("Making node at: " + myPath + " Type: " + myType)
    # Create the node under the given parent path, return its reference and full path
    tempNodeRef = hou.node(myPath).createNode(myType)
    return tempNodeRef, tempNodeRef.path()


# I don't like the X, Y offset for automatic layout, just want a Y offset for created nodes
def adjPosition(myNodeRef, myOffset):  # Takes node reference, applies cumulative Y offset
    global vertOffsetSum
    # Advance the running offset sum, then place the node there (X is always 0)
    vertOffsetSum = hou.Vector2(0, vertOffsetSum[1] + myOffset[1])
    myNodeRef.setPosition(vertOffsetSum)
    return vertOffsetSum


# Takes two node object references; assumes first input index, first output index
def wireNodes(downstreamRef, upstreamRef):
    # Feed upstreamRef's first output into downstreamRef's first input
    downstreamRef.setInput(0, upstreamRef, 0)


def getNodeRef(myNodeName):  # Takes spawned node name, returns object reference if matched
    for nodePath in assetNodePaths:  # Using global list of stored spawned node paths
        tempNode = hou.node(nodePath)
        if tempNode.name() == myNodeName:  # Check node name - matches?
            return tempNode
    return None


# First element is top "root" node of the structure - for more complex topologies, you'll have
# to make changes to this code, including my assumptions about created node names
# Nodes are created in the order listed, left to right, using node type names
assetNodeList = ['geo', 'box', 'groupcreate', 'xform', 'normal', 'uvunwrap', 'attribcreate',
                 'merge', 'uvlayout', 'material', 'groupcreate', 'output', 'rop_fbx']

# Parameter Settings Keys/List - Access syntax nodeParamsList[0]['nodename'][0]['paramkey']
# Yes, this assumes that the node name is the first ever created (nodename1), and for my uses
# it will be - this would require more thorough checking if that assumption isn't true
#
# Hovering your mouse cursor over a parameter in the Houdini node details pane provides the
# referenced parameter name in its pop-up, which is used below - or dump a node's parameters
# using my dumpParms(yournodetreepath) helper function I've provided
nodeParamsList = [{
    'attribcreate1': [
        {'name1': 'path'}
    ],
    'uvlayout1': [
        {'correctareas': 1}, {'axisalignislands': 2}, {'scaling': 1}, {'scale': 1},
        {'rotstep': 0}, {'packbetween': 0}, {'packincavities': 1}, {'padding': 1}, {'paddingboundary': 1},
        {'expandpadding': 0}, {'targettype': 1}, {'usedefaultudimtarget': 1}, {'defaultudimtarget': 1001},
        {'tilesizex': 1}, {'tilesizey': 1}, {'numcolumns': 10}, {'startingudim': 1001}, {'stackislands': 0}
    ],
    'group2': [
        {'groupname': 'rendered_collision_geo_ucx'}
    ],
    'rop_fbx1': [
        {'sopoutput': 'Mesh_AddPathChangeThisName.fbx'}, {'mkpath': 1}, {'buildfrompath': 1}, {'pathattrib': 'path'},
        {'exportkind': 0}, {'sdkversion': ' '}, {'vcformat': 0}, {'invisobj': 0}, {'axissystem': 0},
        {'convertaxis': 0}, {'convertunits': 1}, {'detectconstpointobjs': 1}, {'exportendeffectors': 0},
        {'computesmoothinggroups': 1}
    ]
}]

shaderParamsList = [{
    'principledshader1': [
        {'basecolorr': 1.0}, {'basecolorg': 1.0}, {'basecolorb': 1.0}, {'albedomult': 1.0},
        {'basecolor_usePointColor': 0}, {'basecolor_usePackedColor': 0}, {'rough': 1.0}, {'metallic': 1.0},
        {'reflect': 1.0}, {'baseBumpAndNormal_enable': 1}, {'baseNormal_vectorSpace': 'uvtangent'}
    ]
}]

# Note that on the ROP FBX node converting units is disabled
standinParamsList = [{
    'polyreduce1': [
        {'percentage': 50}
    ],
    'rop_fbx2': [
        {'sopoutput': 'Mesh_AddPathChangeScaleProxyName.fbx'}, {'mkpath': 1}, {'buildfrompath': 1}, {'pathattrib': 'path'},
        {'exportkind': 0}, {'sdkversion': ' '}, {'vcformat': 0}, {'invisobj': 0}, {'axissystem': 0},
        {'convertaxis': 0}, {'convertunits': 0}, {'detectconstpointobjs': 1}, {'exportendeffectors': 0},
        {'computesmoothinggroups': 1}
    ]
}]


def makeDefaultAsset():  # Make nodes based on node list of types
    objRootPath = '/obj'  # Root path for first geo node
    matRootPath = '/mat'  # Root path for material principled shader nodes
    childPath = ''

    # Create root, then child nodes
    for idx, nodeType in enumerate(assetNodeList):
        if idx == 0:  # Root node?
            tempNodeRef, tempNodePath = makeNode(objRootPath, nodeType)
            childPath = tempNodePath  # Assign root path
        else:  # Child of root node, use root node path
            tempNodeRef, tempNodePath = makeNode(childPath, nodeType)
            if idx > 1:  # Start wiring nodes when we're at second child node inside root
                # This assumes input index 0, from first output
                tempNodeRef.setInput(0, assetNodeRefs[idx - 1], 0)
        assetNodeRefs.append(tempNodeRef)
        assetNodePaths.append(tempNodePath)

    # Iterate Nodes Parameter List and set parameters accordingly
    for nodeName, settings in nodeParamsList[0].items():
        tempObjRef = getNodeRef(nodeName)  # Get node object reference for spawned node name
        if tempObjRef is not None:  # If we have an object reference, set parameter(s)
            for setting in settings:
                tempObjRef.setParms(setting)

    # Now adjust positions of all nodes
    for nodeRef in assetNodeRefs:
        adjPosition(nodeRef, vertOffset)

    # Create Principled Shader node in /mat context
    shaderNodeRef, shaderNodePath = makeNode(matRootPath, 'principledshader')
    # May not make sense to set all parameters here - but I have the full list archived in the project folder
    # Set node name; unique_name=True avoids an error if the script is run again
    shaderNodeRef.setName("mat_changethisname", unique_name=True)
    # Additional setup parameters
    for setting in shaderParamsList[0]['principledshader1']:
        shaderNodeRef.setParms(setting)

    # Assign to 'material1' (assetNodeRefs[9]) using its 'shop_materialpath1' parameter
    assetNodeRefs[9].setParms({'shop_materialpath1': shaderNodeRef.path()})

    # Create two more nodes for 'stand in' objects used as scale proxies when constructing scenes/levels
    polyReduceRef, polyReducePath = makeNode(childPath, 'polyreduce')  # Reduce polygons
    rop_fbx2Ref, rop_fbx2Path = makeNode(childPath, 'rop_fbx')  # Another ROP FBX output

    # Set polyreduce params, fbx params
    for setting in standinParamsList[0]['polyreduce1']:
        polyReduceRef.setParms(setting)
    for setting in standinParamsList[0]['rop_fbx2']:
        rop_fbx2Ref.setParms(setting)

    # Connect polyreduce1 to output of material1, connect rop_fbx2 to output of polyreduce1
    wireNodes(polyReduceRef, assetNodeRefs[9])  # (downstream, upstream)
    wireNodes(rop_fbx2Ref, polyReduceRef)

    # Custom X and Y positioning for these two nodes, aligned with the second group
    # (collision mesh) node, assetNodeRefs[10], which normally sits at [0, -11.55]
    standInVertOffset = assetNodeRefs[10].position()
    polyNodePos = hou.Vector2(-3.0, standInVertOffset[1])
    rop_fbx2Pos = hou.Vector2(-3.0, standInVertOffset[1] + (vertOffset[1] * 2))

    # Set positions
    polyReduceRef.setPosition(polyNodePos)
    rop_fbx2Ref.setPosition(rop_fbx2Pos)


# --- Main Exec

# Clear console a bit
print('\n' * 4)

# Timestamp Banner
timeStamp = datetime.datetime.now()
print("\n ----------[ TallTim - Default Asset Node Generator Exec: " + str(timeStamp) + " ]---------- \n")

# Generate Default Geometry Asset for Unreal Engine Export As FBX, With Collision Mesh
makeDefaultAsset()

#dumpParms('/obj/geo1/uvlayout1')  # Get parameters
# Set names by <nodeRef>.setName('myName')
```
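The node positioning is easy to check outside Houdini. The sketch below reproduces the arithmetic of `adjPosition()` in plain Python; `stacked_y_positions` is a hypothetical name, not part of the script. Because the running sum is shared by every entry in `assetNodeRefs`, including the ‘geo’ node that lives in ‘/obj’, the first node inside the geo network lands two steps down, and the second group node ends up at the -11.55 quoted in the script’s comments.

```python
def stacked_y_positions(count, step=-1.05):
    """Return the Y position adjPosition() would give each of `count` nodes, in order."""
    ys = []
    running = 0.0
    for _ in range(count):
        running += step  # the sum is advanced *before* the node is placed
        ys.append(round(running, 4))  # round away floating-point noise
    return ys


assetNodeList = ['geo', 'box', 'groupcreate', 'xform', 'normal', 'uvunwrap', 'attribcreate',
                 'merge', 'uvlayout', 'material', 'groupcreate', 'output', 'rop_fbx']
vert_step = -1.05  # same value as vertOffset[1] in the script

ys = stacked_y_positions(len(assetNodeList), vert_step)
collision_y = ys[10]  # the second 'groupcreate' node (group2)
proxy_rop_y = round(collision_y + 2 * vert_step, 4)  # rop_fbx2 sits two steps lower

for node_type, y in zip(assetNodeList, ys):
    print(f"{node_type:>12}: y = {y}")
print("polyreduce1 y:", collision_y, "| rop_fbx2 y:", proxy_rop_y)
```

Offsetting only Y keeps the main chain in a single straight column, which is why the proxy branch is the only part that needs an explicit X value (-3.0).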

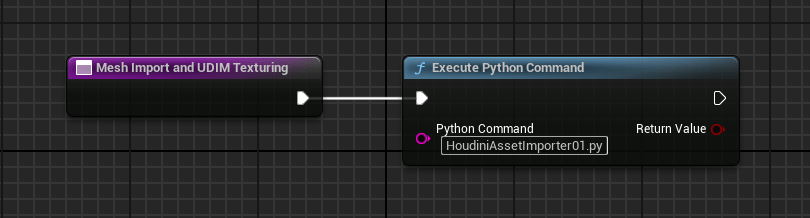

Here’s the result, a generated network of nodes that takes less than a second:

I’ll step through why each node is there, including why I have two FBX output nodes – which may seem confusing at first, but it will make sense, I promise.

Keep in mind that this works in Houdini’s ‘/obj’ context. While it is entirely possible to generate other kinds of assets this way, my first use was to create a ‘/obj/geo’ node with all its sub-nodes so I could start modeling something right away.

From the top down (I’m omitting numbers for most of them, since Houdini appends a ‘1’ to the first instance of a node):

- Box – This is a ‘primitive’ type in Houdini, which just creates a 6-sided cube. I’ll typically replace it with other geometry (curves, swept extrusions, whatever); the box is just there as a stand-in.

- Group – I like keeping things orderly, so for multi-mesh parts I give each part a group name, which makes it easier to refer to if I need to perform specific actions on it later.

- Transform – Not absolutely necessary, but may be needed to place the object on the ‘ground’ construction plane.

- Normal – After the polygons have been created, I find it useful to have normals applied, since later UV mapping and texturing work much better if everything is uniform.

- UV Unwrap – This prepares the mesh for a later step when it comes to texture mapping.

- Attribute Create – This allows me to create a ‘path’ value that tells the FBX exporter my object consists of multiple meshes, very handy when using Substance Painter, since you can then easily mask and select individual parts.

- I just realized that my code example doesn’t set all of these parameters (always something, there are a lot of moving parts): the “Class” setting needs to be “Primitive” and “Type” needs to be “String”. Once this is set, you can type in something like “intro_basic_monitor/monitor_frame”, where the first part is the ‘root’ model name and the latter is the part name. Really helps later down the line.

- Merge – This is where you’d combine all of your parts using the nodes described so far. I left this in because I rarely make anything that is just one single part.

- UV Layout – Here’s where the meat of setting up texture mapping happens. I’m using UDIMs, a method of spreading high-resolution textures across multiple texture tiles, but this step would still be necessary with regular texturing methods. Setting these parameters automatically really saves time.

- Material – This node assigns your material, a shader that lives under the ‘/mat’ context; yes, this script automagically creates a principled shader for you too. You’ll have to rename the material and such, but it helps to have it set up already.

- You’ll notice that there’s a branch ‘split’ here, and I’ll explain briefly why. The ‘rop_fbx1’ node is my high-resolution output mesh. The ‘rop_fbx2’ node is used for ‘proxies’: polyreduced meshes, referenced from an FBX file, that let me assemble a large scene/level in Houdini without copying each asset’s node network. This keeps overhead low and allows me to work on a new asset for a scene without using up a lot of CPU/GPU to do it. It may not matter if you have a beast of a rig, but I know my scenes will have a lot of things in them, so I’m getting ahead of that now.

- The next two nodes are related to Unreal Engine and collision meshes used in the physics engine.

- Group2 – The name ‘rendered_collision_geo_ucx’ tells UE that it should create a collision mesh that matches the following node.

- Output – This node is how UE understands the object geometry and allows it to create a collision mesh on import. You can customize these, but I haven’t attempted that yet.

- rop_fbx1 – As described above, this node saves the high-resolution mesh to the specified path with the proper parameters. You’ll have to set the path yourself; it’s set to a dummy value here.
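The Attribute Create note above (Class must be “Primitive”, Type must be “String”) can also be handled in code. Rather than guessing a menu index, a small helper can look the entry up by its visible label with `hou.Parm.menuLabels()` and set the parameter to that index. This is a sketch under assumptions: the helper name `set_menu_by_label` is hypothetical, the parameter names `class1` and `type1` are my guess for the first attribute on attribcreate (hover over the parameters or run `dumpParms()` to confirm), and it assumes these are ordinal menus whose value is the item index. It is written against any object with `menuLabels()` and `set()`, so it can be tried outside Houdini with a stand-in.

```python
def set_menu_by_label(parm, label):
    """Select the menu entry whose visible label matches `label` (case-insensitive).

    Works with a hou.Parm inside Houdini, or any stand-in that provides
    menuLabels() and set(). Returns the chosen index; raises ValueError if none match.
    """
    labels = list(parm.menuLabels())
    wanted = label.strip().lower()
    for index, text in enumerate(labels):
        if text.strip().lower() == wanted:
            parm.set(index)  # assumption: ordinal menu, value is the item index
            return index
    raise ValueError(f"{label!r} not found in menu {labels}")


# Inside Houdini this might look like (parameter names are assumptions):
#   attrib = hou.node('/obj/geo1/attribcreate1')
#   set_menu_by_label(attrib.parm('class1'), 'Primitive')
#   set_menu_by_label(attrib.parm('type1'), 'String')


class FakeMenuParm:
    """Minimal stand-in for a hou.Parm, for trying the helper outside Houdini."""

    def __init__(self, labels):
        self._labels = labels
        self.value = 0

    def menuLabels(self):
        return tuple(self._labels)

    def set(self, value):
        self.value = value


demo = FakeMenuParm(['Detail', 'Primitive', 'Point', 'Vertex'])  # hypothetical label order
print(set_menu_by_label(demo, 'primitive'), demo.value)
```

Matching on labels instead of hard-coded indices means the script keeps working if a future Houdini version reorders a menu, and it fails loudly with the available labels if a name changes.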

Another note about this Python script: the ‘assetNodeList’ variable assumes that the FIRST node is the ‘root’ under the ‘/obj’ context, and the rest of the nodes are children of this ‘root’ node. If you wanted to make a different asset using this, you’d have to change how I detect/handle the root node type, but it’s totally doable with a few small alterations.
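One way to loosen the ‘first entry is the root’ assumption is to keep the root type separate from the chain of child types, and to write the builder against the two `hou.Node` methods the script already relies on: `createNode(type)` and `setInput(index, node, output_index)`. The sketch below is hypothetical (the `build_chain` name and the spec layout are mine, not part of the original script). It takes the parent node itself rather than a path, so it can be tested with stand-in objects outside Houdini and pointed at `hou.node('/obj')` inside it.

```python
def build_chain(context_node, root_type, child_types):
    """Create root_type under context_node, then create child_types inside the root,
    wiring each child's first input to the previous child's first output.

    Returns (root, children). Works with hou.Node, or any stand-in that offers
    createNode(type_name) and setInput(input_index, node, output_index).
    """
    root = context_node.createNode(root_type)
    children = []
    for type_name in child_types:
        node = root.createNode(type_name)
        if children:  # the first child has nothing upstream to connect to
            node.setInput(0, children[-1], 0)
        children.append(node)
    return root, children


class FakeNode:
    """Stand-in for hou.Node that only records what would be created and wired."""

    def __init__(self, type_name):
        self.type_name = type_name
        self.created = []
        self.inputs = {}

    def createNode(self, type_name):
        child = FakeNode(type_name)
        self.created.append(child)
        return child

    def setInput(self, input_index, node, output_index=0):
        self.inputs[input_index] = (node, output_index)


# Same content as assetNodeList, with the root pulled out of the list
asset_spec = {
    'root': 'geo',
    'chain': ['box', 'groupcreate', 'xform', 'normal', 'uvunwrap', 'attribcreate',
              'merge', 'uvlayout', 'material', 'groupcreate', 'output', 'rop_fbx'],
}

obj = FakeNode('obj')  # inside Houdini this would be hou.node('/obj')
root, chain = build_chain(obj, asset_spec['root'], asset_spec['chain'])
print(root.type_name, len(chain), chain[1].inputs[0][0].type_name)
```

With this layout, swapping the root for a different container type only means editing the spec, not the loop; side branches such as the proxy output would still be created and wired by hand, as the original script does.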

That’s it for now; it’s quite a long post. I’ll post more as I get time.